
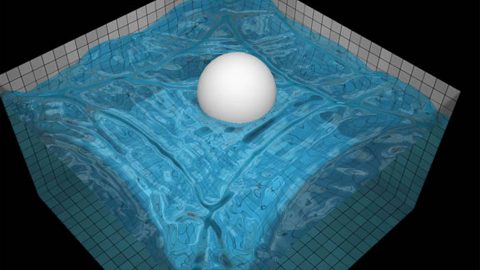

9/3/2023 WebGL water simulation

Generally, one can either stick with a single linked-list grid (sampling 6 different but overlapping cells per velocity component) or use three different linked-list grids. I eventually settled on three different grids, processing a single velocity component at a time.

Note that by far the biggest bottleneck in this approach is walking the particle linked list. A shared memory optimization yielded a >4x speedup: every thread walks only a single linked list, stores the result to shared memory and then reads the remaining seven neighbor linked lists from shared memory.

Particles get scattered in memory over time (i.e. memory & spatial location diverge), resulting in slower and slower volume sampling & transfer. To counteract this, particles are binned to cells every n steps. Strict sorting is not necessary, so it's done by counting all particles in each cell and then summing all cells up, creating prefix sums in each cell. Introducing some "sloppiness" in the spatial ordering of the cells makes it possible to do this in a single pass (for details refer to the particle binning shader code).

Using a Preconditioned Conjugate Gradient solver for solving the Poisson pressure equation (PPE). In comments and naming in the code I'm following the description in Bridson's book.
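The counting and prefix-sum binning mentioned above can be sketched on the CPU as follows. This is only an illustration under assumptions: it is the strict, multi-pass form run serially, not the single-pass "sloppy" shader variant, and the function name `bin_particles` and its inputs are hypothetical rather than taken from the project's code.

```rust
/// Hypothetical helper: given the cell index of every particle, returns
/// (first slot of each cell, new slot of each particle) so that particles
/// sharing a cell become contiguous in memory.
pub fn bin_particles(cell_of_particle: &[usize], num_cells: usize) -> (Vec<usize>, Vec<usize>) {
    // Step 1: count the particles in each cell.
    let mut counts = vec![0usize; num_cells];
    for &cell in cell_of_particle {
        counts[cell] += 1;
    }

    // Step 2: exclusive prefix sum over the counts gives each cell's first slot.
    let mut cell_start = vec![0usize; num_cells];
    let mut running = 0;
    for (start, &count) in cell_start.iter_mut().zip(&counts) {
        *start = running;
        running += count;
    }

    // Step 3: hand out slots inside each cell's range. The order within a
    // cell does not matter, which is why no strict sort is needed.
    let mut cursor = cell_start.clone();
    let mut new_index = vec![0usize; cell_of_particle.len()];
    for (particle, &cell) in cell_of_particle.iter().enumerate() {
        new_index[particle] = cursor[cell];
        cursor[cell] += 1;
    }

    (cell_start, new_index)
}
```

Calling `bin_particles(&[2, 0, 2, 1, 0], 3)` places the two particles of cell 0 in slots 0 and 1, the particle of cell 1 in slot 2, and the two particles of cell 2 in slots 3 and 4. On a GPU, steps 1 and 3 would typically use atomic increments instead of a serial loop.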

Noted down a few interesting implementation details here.

Transferring the particles' velocity to the grid is tricky & costly to do in parallel! Either velocities are scattered by doing 8 atomic adds per particle to the surrounding grid cells, or every grid cell gathers by traversing its neighboring cells. There are some very clever ideas on how to do efficient scattering in Gao et al. 2018, GPU Optimization of Material Point Methods, using subgroup operations (i.e. inter warp/wavefront shuffles) and atomics. Note though that today atomic float addition is pretty much only available in CUDA and OpenGL/Vulkan (using extensions which are only supported on Nvidia), and subgroup operations are not available in wgpu as of writing.

In Blub I tried a (to my knowledge) new variant of the gather approach: particles form a linked list by putting their index with an atomic exchange operation into a "linked list head pointer grid", which is a grid dual to the main velocity volume. Then every velocity grid cell walks 8 neighboring linked lists to gather velocity (this makes it a sort of hybrid between naive scatter and gather).

Note that this all makes MAC/staggered grids a lot less appealing, since the volume in which particles need to be accumulated gets bigger & more complicated, i.e. a lot slower. After various tries with collocated grids I ended up using staggered after all (for some details see #14), since I couldn't get the collocated case quite right. (To avoid artifacts with collocated grids, Rhie-Chow interpolation is required. It's widespread in CFD since collocated grids are required for arbitrary meshes, but it's hard to find any resources in the computer graphics community.)
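To make the linked-list gather described above more concrete, here is a minimal CPU sketch in Rust. It is not the project's shader code: the names `HeadGrid` and `Particle`, the single scalar velocity component, the trilinear weights and the weighted-average normalization are all assumptions chosen for illustration. Particle positions are assumed to be in grid units and inside the grid.

```rust
use std::sync::atomic::{AtomicU32, Ordering};

/// Marks the end of a per-cell particle list.
const END_OF_LIST: u32 = u32::MAX;

/// Hypothetical particle: position in grid units, one velocity component.
pub struct Particle {
    pub pos: [f32; 3],
    pub vel: f32,
}

/// Hypothetical stand-in for the "linked list head pointer grid".
pub struct HeadGrid {
    dim: [usize; 3],
    heads: Vec<AtomicU32>, // per cell: most recently inserted particle
    next: Vec<AtomicU32>,  // per particle: next particle in the same cell
}

impl HeadGrid {
    pub fn new(dim: [usize; 3], num_particles: usize) -> Self {
        let cells = dim[0] * dim[1] * dim[2];
        HeadGrid {
            dim,
            heads: (0..cells).map(|_| AtomicU32::new(END_OF_LIST)).collect(),
            next: (0..num_particles).map(|_| AtomicU32::new(END_OF_LIST)).collect(),
        }
    }

    fn cell_index(&self, c: [usize; 3]) -> usize {
        c[0] + self.dim[0] * (c[1] + self.dim[1] * c[2])
    }

    /// Scatter half: one atomic exchange per particle pushes it onto the
    /// list of the cell that contains it.
    pub fn insert(&self, index: u32, p: &Particle) {
        let cell = [p.pos[0] as usize, p.pos[1] as usize, p.pos[2] as usize];
        let old_head = self.heads[self.cell_index(cell)].swap(index, Ordering::AcqRel);
        self.next[index as usize].store(old_head, Ordering::Release);
    }

    /// Gather half: the node at integer position `node` walks the lists of
    /// the 8 cells touching it. Meant to run in a pass after all inserts.
    pub fn gather(&self, node: [usize; 3], particles: &[Particle]) -> f32 {
        let (mut weighted_sum, mut weight_sum) = (0.0f32, 0.0f32);
        for offset in 0..8usize {
            let mut cell = [0usize; 3];
            let mut inside = true;
            for axis in 0..3 {
                let c = node[axis] as isize - 1 + ((offset >> axis) & 1) as isize;
                if c < 0 || c >= self.dim[axis] as isize {
                    inside = false;
                    break;
                }
                cell[axis] = c as usize;
            }
            if !inside {
                continue; // neighbor cell lies outside the grid
            }
            let mut current = self.heads[self.cell_index(cell)].load(Ordering::Acquire);
            while current != END_OF_LIST {
                let p = &particles[current as usize];
                // Trilinear weight of the particle relative to the node.
                let w: f32 = (0..3)
                    .map(|a| (1.0 - (p.pos[a] - node[a] as f32).abs()).max(0.0))
                    .product();
                weighted_sum += w * p.vel;
                weight_sum += w;
                current = self.next[current as usize].load(Ordering::Acquire);
            }
        }
        if weight_sum > 0.0 { weighted_sum / weight_sum } else { 0.0 }
    }
}
```

The insert costs one atomic exchange per particle instead of 8 atomic adds, and the gather needs no atomics at all, which is the trade-off the text calls a hybrid between scatter and gather.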
To learn more about fluid simulation in general, check out my Gist on CFD where I gathered a lot of resources on the topic.

Implements, on the GPU: APIC (Jiang et al., The Affine Particle-In-Cell Method, SIGGRAPH 2015) and implicit density projection (Kugelstadt et al., Implicit Density Projection for Volume Conserving Liquids, IEEE Transactions on Visualization and Computer Graphics 2019).

Experimenting with GPU-driven 3D fluid simulation using wgpu. Focusing on hybrid Lagrangian/Eulerian approaches here (PIC/FLIP/APIC...).

I gave a talk about it at the Rust Graphics Meetup.

For SPH (pure Lagrangian) fluid simulation, check out my simple 2D DFSPH fluid simulator, YASPH2D.

Requires git-lfs (for large textures & meshes). Note that there are a few extra dependencies due to shaderc; if your build fails, check shaderc-rs' build instructions. Should work on Linux/Windows - I'm developing on Windows, so things might break at random for the others.

Running in release mode (cargo run --release) can be significantly faster.

First time loading any scene/background is a bit slower since some of the pre-computations are cached on disk, such as computing the signed distance field (happens brute force on the GPU).

Shaders are hot reloaded on change, have fun! (On failure it will keep using the previously loaded shader.)

"Scenes": Simple JSON format where I dump various properties that I think are either too hard/annoying to set via UI at all or that I'd like to have saved. Can be reloaded at runtime and will pick up any change.

Major dependencies: wgpu (as of writing all this is still in heavy development, so I'm using some master version, updated at irregular intervals) and various other amazing crates, check the Cargo.toml file.
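The "keep using the previously loaded shader on failure" behavior of the hot reload can be sketched like this. The type `HotReloaded` and the `compile` closure are hypothetical and only illustrate the fallback pattern; the project's actual shader loading code is not shown here and may be structured differently.

```rust
/// Hypothetical holder for the last successfully compiled shader of type `S`.
pub struct HotReloaded<S> {
    current: S,
}

impl<S> HotReloaded<S> {
    pub fn new(initial: S) -> Self {
        Self { current: initial }
    }

    /// Runs `compile` and swaps in the result only if it succeeded.
    /// On error the previous shader stays active and the error is returned
    /// so the caller can log it.
    pub fn reload<E>(&mut self, compile: impl FnOnce() -> Result<S, E>) -> Result<(), E> {
        self.current = compile()?;
        Ok(())
    }

    /// The shader that should be used for rendering right now.
    pub fn get(&self) -> &S {
        &self.current
    }
}
```

A file watcher would call `reload` whenever a shader source file changes; a failed compile then only produces an error message while rendering continues with the old shader.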
